A recent medical application project demonstrates the extreme challenges of ultra-low latency design. The system required an end-to-end latency of just 500 nanoseconds for an EtherCAT industrial Ethernet implementation, a requirement close to the limit of what is technically feasible.

The project faced immediate constraints from the Ethernet interface itself. Standard 100 Mbps Ethernet with a 4-bit parallel interface (MII) is clocked at 25 MHz, so each clock cycle lasts 40 nanoseconds. A 500-nanosecond budget therefore spans only 12.5 clock periods, which left the system 12 full clock cycles to complete all processing.
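The arithmetic behind this constraint can be checked with a minimal sketch. The function name `cycle_budget` is made up for illustration; only the 500 ns budget and the 25 MHz clock come from the project description.

```python
def cycle_budget(latency_ns: float, clock_mhz: float) -> tuple[float, int]:
    """Return (clock period in ns, whole clock cycles that fit in the budget)."""
    period_ns = 1000.0 / clock_mhz            # 1 / f, expressed in nanoseconds
    whole_cycles = int(latency_ns // period_ns)  # partial cycles cannot be used
    return period_ns, whole_cycles


# Values from the text: 500 ns end-to-end budget, 25 MHz interface clock.
period_ns, cycles = cycle_budget(latency_ns=500, clock_mhz=25)
print(f"period = {period_ns} ns, full cycles available = {cycles}")
```

Rounding down matters here: 500 ns covers 12.5 periods, but only complete clock cycles are available to synchronous logic.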

The budget shrank further because the external Ethernet interface chips consumed more than half of it through their own internal processing delays. This left only a few clock cycles for the actual FPGA processing, forcing the design team to optimize every aspect of the system architecture.

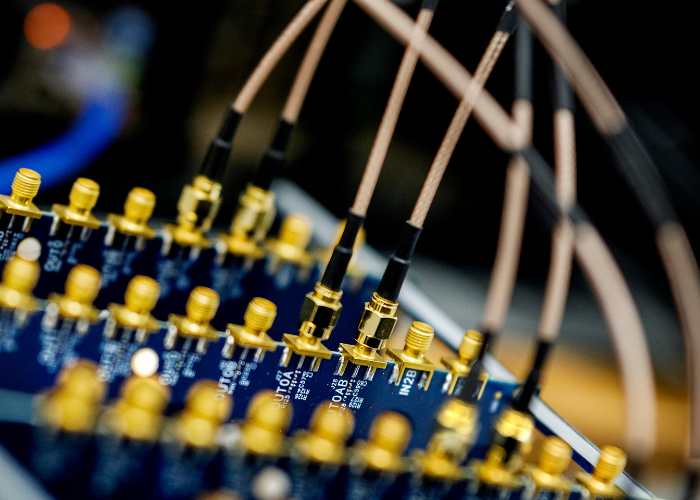

The solution required sophisticated clock management and synchronization techniques. Standard synchronization schemes typically cost 2 clock cycles, or 80 nanoseconds of an already scarce budget. Rather than pay that cost, our team designed custom clock distribution systems that delivered pre-synchronized data to the FPGA processing logic.
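To see why saving two cycles matters, the sketch below compares the cycles left for FPGA logic with and without a 2-cycle synchronizer. The interface-chip delay of 260 ns is a hypothetical placeholder chosen only to satisfy "more than half the budget"; the real figure is not given here, and the names `remaining_cycles` and `ASSUMED_PHY_DELAY_NS` are illustrative.

```python
PERIOD_NS = 40    # clock period at 25 MHz (from the text)
BUDGET_NS = 500   # end-to-end latency requirement (from the text)

# ASSUMPTION: hypothetical interface-chip delay, just over half the budget.
ASSUMED_PHY_DELAY_NS = 260


def remaining_cycles(phy_delay_ns: int, sync_cycles: int) -> int:
    """Whole clock cycles left for FPGA logic after fixed delays are subtracted."""
    remaining_ns = BUDGET_NS - phy_delay_ns - sync_cycles * PERIOD_NS
    return max(remaining_ns, 0) // PERIOD_NS


with_sync = remaining_cycles(ASSUMED_PHY_DELAY_NS, sync_cycles=2)
without_sync = remaining_cycles(ASSUMED_PHY_DELAY_NS, sync_cycles=0)
print(f"with 2-cycle synchronizer: {with_sync} cycles")
print(f"with pre-synchronized data: {without_sync} cycles")
```

Under these assumed numbers, removing the synchronizer raises the cycles available to the processing logic from 4 to 6, a 50% increase in usable time.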

This project exemplifies how ultra-low latency design extends beyond the FPGA itself to encompass complete system architecture, including external component selection, clock distribution, and interface timing optimization.